Spice v2.0-rc.2 (Apr 10, 2026)

Announcing the release of Spice v2.0-rc.2! 🔥

v2.0.0-rc.2 is the second release candidate for advanced testing of v2.0, building on v2.0.0-rc.1.

Highlights in this release candidate include:

- Distributed Spice Cayenne Query and Write Improvements with data-local query routing and partition-aware write-through

- DataFusion v52.4.0 Upgrade with aligned `arrow-rs`, `datafusion-federation`, and `datafusion-table-providers`

- MERGE INTO for Spice Cayenne catalog tables with distributed support across executors

- `PARTITION BY` Support for Cayenne enabling SQL-defined partitioning in `CREATE TABLE` statements

- ADBC Data Connector & Catalog with full query federation, BigQuery support, and schema/table discovery

- Databricks Lakehouse Federation Improvements with improved reliability, resilience, DESCRIBE TABLE fallback, and source-native type parsing

- Delta Lake Column Mapping supporting Name and Id mapping modes

- HTTP Pagination support for paginated API endpoints in the HTTP data connector

- New Catalog Connectors for PostgreSQL, MySQL, MSSQL, and Snowflake

- JSON Ingestion Improvements with single-object support, `soda` (Socrata Open Data) format support, `json_pointer` extraction, and auto-detection

- Per-Model Rate-Limited AI UDF Execution for controlling concurrent AI function invocations

- Dependency upgrades including Turso v0.5.3, iceberg-rust v0.9, and Vortex improvements

What's New in v2.0.0-rc.2

Distributed Cayenne Query and Write Improvements

Distributed query for Cayenne-backed tables now has better partition awareness for both reads and writes.

Key improvements:

- Data-Local Query Routing: Cayenne catalog queries can now be routed to executors that hold the relevant partitions, improving distributed query efficiency.

- Partition-Aware Write Through: Scheduler-side Flight `DoPut` ingestion now splits partitioned Cayenne writes and forwards them to the responsible executors instead of routing through a single raw-forward path.

- Dynamic Partition Assignment: Newly observed partitions can be added and assigned atomically as data arrives, with persisted partition metadata for future routing.

- Better Cluster Coordination: Partition management is now separated for accelerated and federated tables, improving routing behavior for distributed Cayenne catalog workloads.

- Distributed UPDATE/DELETE DML: UPDATE and DELETE statements for Cayenne catalog tables are now forwarded to all executors in distributed mode, with all executors required to succeed.

- Distributed `runtime.task_history`: Task history is now replicated across the distributed cluster for observability.

- RefreshDataset Control Stream: Dataset refresh operations are now distributed via the control stream to executors.

- Executor DDL Sync: When an executor connects, it receives DDL for all existing tables, ensuring late-joining executors have full table state.
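The partition-aware write-through and dynamic partition assignment described above can be pictured with a small sketch. This is not Spice's implementation: the function names, the in-memory `assignments` dict (standing in for persisted partition metadata), and the "fewest partitions wins" policy are all assumptions made for illustration.

```python
from collections import Counter, defaultdict


def split_by_partition(rows, partition_key):
    """Group rows by the value of their partition column."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[partition_key]].append(row)
    return dict(groups)


def route_writes(rows, partition_key, assignments, executors):
    """Return {executor: rows}, assigning unseen partitions on first sight.

    `assignments` maps partition value -> executor and is updated in place.
    It is a hypothetical stand-in for persisted partition metadata.
    """
    batches = defaultdict(list)
    for value, group in split_by_partition(rows, partition_key).items():
        if value not in assignments:
            # Assumed policy: a new partition goes to the executor that
            # currently owns the fewest partitions (ties broken by name).
            owned = Counter(assignments.values())
            assignments[value] = min(executors, key=lambda e: (owned[e], e))
        batches[assignments[value]].extend(group)
    return dict(batches)


rows = [
    {"id": 1, "region": "us"},
    {"id": 2, "region": "eu"},
    {"id": 3, "region": "us"},
    {"id": 4, "region": "ap"},
]
assignments = {"us": "executor-a"}
batches = route_writes(rows, "region", assignments, ["executor-a", "executor-b"])
```

Here the `us` rows follow the existing assignment, while `eu` and `ap` are seen for the first time and are assigned before their rows are forwarded, so later writes and reads for those partitions can be routed to the same executor.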

MERGE INTO for Spice Cayenne

Spice now supports MERGE INTO statements for Cayenne catalog tables, enabling upsert-style data operations with full distributed support.

Key improvements:

- MERGE INTO Support: Execute `MERGE INTO` statements against Cayenne catalog tables for combined insert/update/delete operations.

- Distributed MERGE: MERGE operations are automatically distributed across executors in cluster mode.

- Data Safety: Duplicate source keys are detected and prevented to avoid data loss during MERGE operations.

- Chunked Delete Filters: Large MERGE delete filter lists are chunked to prevent stack overflow with Vortex IN-list expressions.
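The two safety measures above (rejecting duplicate source keys and chunking large delete filters) can be sketched as follows. The helper names, the chunk size, and the generated predicate text are hypothetical and only illustrate the idea; they do not reflect how Spice or Vortex build these expressions internally.

```python
from collections import Counter


def find_duplicate_keys(source_rows, key):
    """Return the sorted key values that appear more than once in the source."""
    counts = Counter(row[key] for row in source_rows)
    return sorted(k for k, n in counts.items() if n > 1)


def chunked(values, size):
    """Split a list into consecutive chunks of at most `size` items."""
    if size <= 0:
        raise ValueError("size must be positive")
    return [values[i:i + size] for i in range(0, len(values), size)]


def delete_filters(keys, column, chunk_size):
    """Build one IN-list predicate per chunk instead of one huge IN-list.

    Only integer keys are handled here; string keys would need quoting.
    """
    return [
        f"{column} IN ({', '.join(str(k) for k in chunk)})"
        for chunk in chunked(keys, chunk_size)
    ]


source = [{"id": 1}, {"id": 2}, {"id": 2}, {"id": 3}]
dupes = find_duplicate_keys(source, "id")
filters = delete_filters([1, 2, 3, 4, 5], "id", chunk_size=2)
```

A MERGE whose source yields a non-empty `dupes` list would be rejected up front, and each entry in `filters` stays small regardless of how many keys need deleting.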

PARTITION BY Support for Cayenne

SQL Partition Management: Spice now supports PARTITION BY for Cayenne-backed CREATE TABLE statements, enabling partition definitions to be expressed directly in SQL and persisted in the Cayenne catalog.

Key improvements:

- SQL Partition Definition: Define Cayenne table partitioning directly in SQL using `CREATE TABLE ... PARTITION BY (...)`.

- Partition Validation: Partition expressions are parsed and validated during DDL analysis before table creation.

- Persisted Partition Metadata: Partition metadata is stored in the Cayenne catalog and can be reloaded by the runtime after restart.

- Distributed DDL Support: Partition metadata is forwarded when `CREATE TABLE` is distributed to executors in cluster mode.

- Improved Type Support: Partition utilities now support newer string scalar variants such as `Utf8View`.

Example:

CREATE TABLE events (id INT, region TEXT, ts TIMESTAMP) PARTITION BY (region)

Catalog Connector Enhancements

Spice now includes additional catalog connectors for major database systems, improving schema discovery and federation workflows across external data systems.

Key improvements:

- New Catalog Connectors: Added catalog connectors for PostgreSQL, MySQL, MSSQL, and Snowflake.

- Schema and Table Discovery: Connectors use native metadata catalogs such as `information_schema` / `INFORMATION_SCHEMA` to discover schemas and tables.

- Improved Federation Workflows: These connectors make it easier to expose external database metadata through Spice for cross-system federation scenarios.

- PostgreSQL Partitioned Tables: Fixed schema discovery for PostgreSQL partitioned tables.

Example PostgreSQL catalog configuration:

catalogs:
  - from: pg
    name: pg
    include:
      - 'public.*'
    params:
      pg_host: localhost
      pg_port: 5432
      pg_user: postgres
      pg_pass: ${secrets:POSTGRES_PASSWORD}
      pg_db: my_database
      pg_sslmode: disable

JSON Ingestion Improvements

JSON ingestion is now more flexible and robust.

Key improvements:

- More JSON Formats: Added support for single-object JSON documents, auto-detected JSON formats, and Socrata SODA responses.

- `json_pointer` Extraction: Extract nested payloads before schema inference and reading using RFC 6901 JSON Pointer syntax.

- Better Auto-Detection: JSON format detection now handles arrays, objects, JSONL, and BOM-prefixed input more reliably, including single multi-line objects.

- SODA Support: Added schema extraction and data conversion for Socrata Open Data API responses.

- Broader Compatibility: Improved handling for BOM-prefixed files, CRLF-delimited JSONL, nested payloads, mixed structures, and wrapped documents.

Example using json_pointer to extract nested data from an API response:

datasets:
  - from: https://api.example.com/v1/data
    name: users
    params:
      json_pointer: /data/users
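To make the `/data/users` pointer above concrete, here is a minimal sketch of RFC 6901 resolution against a parsed document. The `resolve_pointer` helper and the sample response are assumptions for illustration; Spice's own extraction is not shown in these notes and may differ (for example, this sketch does not handle the `-` array token).

```python
import json


def resolve_pointer(document, pointer):
    """Resolve an RFC 6901 JSON Pointer against a parsed JSON document."""
    if pointer == "":
        return document  # the empty pointer refers to the whole document
    if not pointer.startswith("/"):
        raise ValueError("a non-empty JSON Pointer must start with '/'")
    current = document
    for raw in pointer[1:].split("/"):
        # Unescape per RFC 6901: "~1" becomes "/" first, then "~0" becomes "~".
        token = raw.replace("~1", "/").replace("~0", "~")
        if isinstance(current, list):
            if not token.isdigit():
                raise ValueError(f"invalid array index: {token!r}")
            current = current[int(token)]
        else:
            current = current[token]
    return current


# Hypothetical API response wrapping the rows of interest.
response = json.loads(
    '{"data": {"users": [{"id": 1, "name": "a"}, {"id": 2, "name": "b"}]},'
    ' "meta": {"page": 1}}'
)
users = resolve_pointer(response, "/data/users")
```

With this response, `users` holds only the nested array, which is the payload that schema inference would then see instead of the outer wrapper object.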

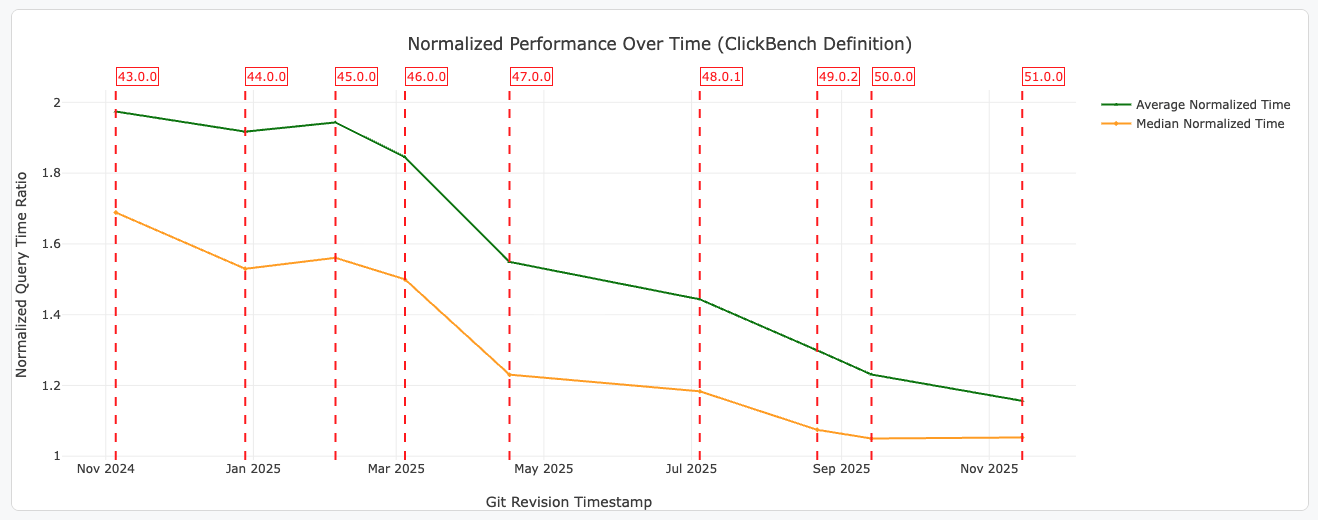

DataFusion v52.4.0 Upgrade

Apache DataFusion has been upgraded from v52.2.0 to v52.4.0, with aligned updates across arrow-rs, datafusion-federation, and datafusion-table-providers.

Key improvements:

- DataFusion v52.4.0: Brings the latest fixes and compatibility improvements across query planning and execution.

- Strict Overflow Handling: `try_cast_to` now uses strict cast to return errors on overflow instead of silently producing NULL values.

- Federation Fix: Fixed SQL unparsing for Inexact filter pushdown with aliases.

- Partial Aggregation Optimization: Improved partial aggregation performance for `FlightSQLExec`.
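The behavioral difference behind the strict overflow handling change can be illustrated with a toy Int32 cast. Both functions below are hypothetical stand-ins written for this example; they are not the `try_cast_to` implementation.

```python
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def lenient_cast_to_int32(values):
    """Old-style behavior: out-of-range values silently become None (NULL)."""
    return [
        v if v is None or INT32_MIN <= v <= INT32_MAX else None
        for v in values
    ]


def strict_cast_to_int32(values):
    """Strict behavior: any out-of-range value raises instead of becoming NULL."""
    for v in values:
        if v is not None and not INT32_MIN <= v <= INT32_MAX:
            raise OverflowError(f"value {v} does not fit in Int32")
    return list(values)


column = [1, 2**31, None]
lenient = lenient_cast_to_int32(column)

try:
    strict_cast_to_int32(column)
    strict_error = None
except OverflowError as err:
    strict_error = str(err)
```

In the lenient path the overflowing value is indistinguishable from a genuine NULL afterwards, which is why surfacing an error is the safer default.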

Dependency Upgrades

| Dependency | Version / Update |

|---|---|

| Turso (libsql) | v0.5.3 (from v0.4.4) |

| iceberg-rust | v0.9 |

| Vortex | Map type support, stack-safe IN-lists |

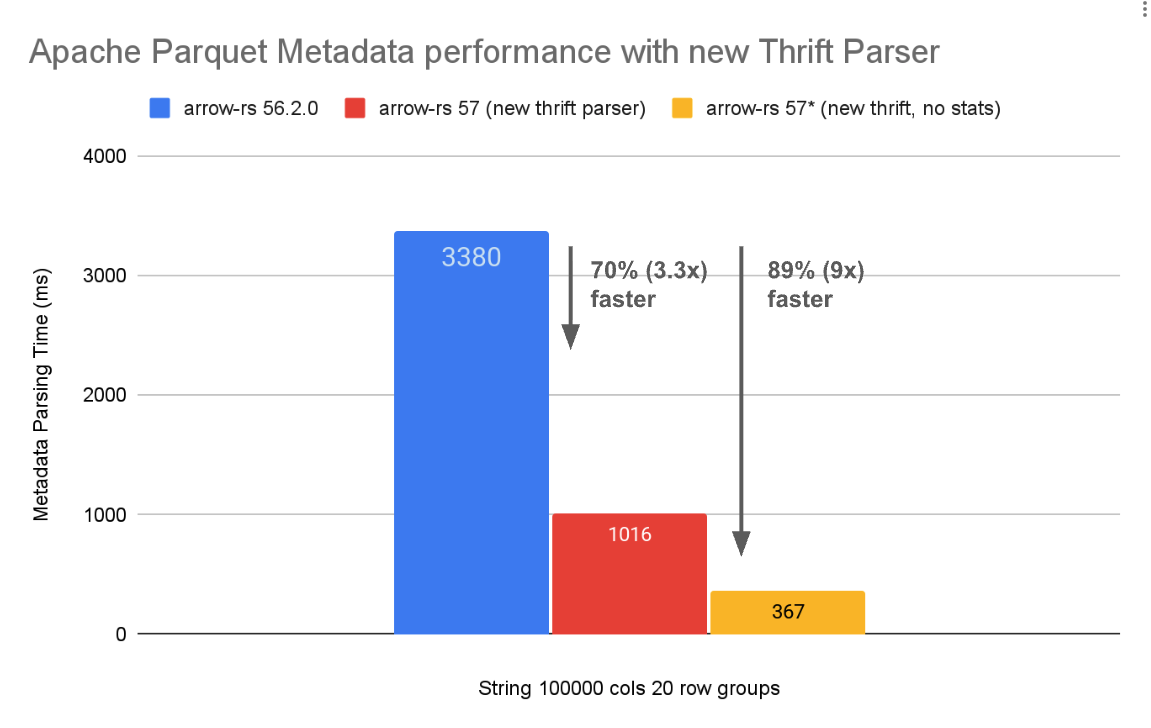

| arrow-rs | Arrow v57.2.0 |

| datafusion-federation | Updated for DataFusion v52.4.0 alignment |

| datafusion-table-providers | Updated for DataFusion v52.4.0 alignment |

| datafusion-ballista | Bumped to fix BatchCoalescer schema mismatch panic |

Other Improvements

- Cayenne released as RC: Cayenne data accelerator is now promoted to release candidate status.

- File Update Acceleration Mode: Added `mode: file_update` acceleration mode for file-based data refresh.

- `spice completions` Command: New CLI command for generating shell completion scripts, with auto-detection of shell directory.

- `--endpoint` Flag: Added `--endpoint` flag to `spice run` with scheme-based routing for custom endpoints.

- mTLS Client Auth: Added mTLS client authentication support to the `spice sql` REPL.

- DynamoDB DML: Implemented DML (INSERT, UPDATE, DELETE) support for the DynamoDB table provider.

- Caching Retention: Added retention policies for cached query results.

- GraphQL Custom Auth Headers: Added custom authorization header support for the GraphQL connector.

- ClickHouse Date32 Support: Added `Date32` type support for the ClickHouse connector.

- AWS IAM Role Source: Added `iam_role_source` parameter for fine-grained AWS credential configuration.

- S3 Metadata Columns: Metadata columns renamed to `_location`, `_last_modified`, `_size` for consistency, with more robust handling in projected queries.

- S3 URL Style: Added `s3_url_style` parameter for S3 connector URL addressing (path-style vs virtual-hosted). Useful for S3-compatible stores like MinIO:

      params:
        s3_endpoint: https://minio.local:9000
        s3_url_style: path

- S3 Parquet Performance: Improved S3 parquet read performance.

- HTTP Caching: Transient HTTP error responses such as `429` and `5xx` are no longer cached, preventing stale error payloads from being served from cache.

- HTTP Connector Metadata: Added `response_headers` as structured map data for HTTP datasets.

- Views `on_zero_results`: Accelerated views now support `on_zero_results: use_source` to fall back to the source when no results are found:

      views:
        - name: sales_summary
          sql: |
            SELECT region, SUM(amount) as total
            FROM sales
            GROUP BY region
          acceleration:
            enabled: true
            on_zero_results: use_source

- Flight `DoPut` Ingestion Metrics: Added `rows_written` and `bytes_written` metrics for Flight `DoPut` / ADBC ETL ingestion.

- EXPLAIN ANALYZE Metrics: Added metrics for EXPLAIN ANALYZE in FlightSQLExec.

- Scheduler Executor Metrics: Added `scheduler_active_executors_count` metric for monitoring active executors.

- Query Memory Limit: Updated default query memory limit from 70% to 90%, with `GreedyMemoryPool` for improved memory management.

- MetastoreTransaction Support: Added transaction support to prevent concurrent metastore transaction conflicts.

- Iceberg REST Catalog: Coerce unsupported Arrow types to Iceberg v2 equivalents in the REST catalog API.

- CDC Cache Invalidation: Improved cache invalidation for CDC-backed datasets.

- Spice.ai Connector Alignment: Parameter names aligned across catalog and data connectors for Spice.ai Cloud.

- Cayenne File Size: Cayenne now correctly respects the configured target file size (defaults to 128MB).

- Cayenne Primary Keys: Properly set `primary_keys`/`on_conflict` for Cayenne tables.

- Turso Metastore Performance: Cached metastore connections and prepared statements for improved Turso and SQLite metastore performance.

- Turso SQL Robustness: More robust SQL unparsing and date comparison handling for Turso.

- Dictionary Type Normalization: Normalize Arrow Dictionary types for DuckDB and SQLite acceleration.

- GitHub Connector Resilience: Improved GraphQL client resilience, performance, and ref filter handling.

- ODBC Fix: Fixed ODBC queries silently returning 0 rows on query failure.

- Anthropic Fixes: Fixed compatibility issues with Anthropic model provider.

- v1/responses API Fix: The `/v1/responses` API now correctly preserves client instructions when `system_prompt` is set.

- Shared Acceleration Snapshots: Show an error when snapshots are enabled on a shared acceleration file.

- Distributed Mode Error Handling: Improved error handling for distributed mode and `state_location` configuration.

- Helm Chart: Added support for ServiceAccount annotations and AWS IRSA example.

- Perplexity Removed: Removed Perplexity model provider support.

- Rust v1.93.1: Upgraded Rust toolchain to v1.93.1.
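As an illustration of the HTTP Caching item in the list above, the sketch below shows one way a cache can decline to store transient error responses. The `ResponseCache` class and `is_cacheable` helper are hypothetical and written only for this example; they do not describe Spice's cache internals.

```python
def is_cacheable(status):
    """Treat 429 and all 5xx responses as transient and never cache them."""
    return status != 429 and not 500 <= status <= 599


class ResponseCache:
    """A toy in-memory cache keyed by URL."""

    def __init__(self):
        self._entries = {}

    def store(self, url, status, body):
        """Store the response unless it is a transient error; report whether it was stored."""
        if not is_cacheable(status):
            return False
        self._entries[url] = (status, body)
        return True

    def get(self, url):
        """Return the cached (status, body) pair, or None on a miss."""
        return self._entries.get(url)


cache = ResponseCache()
url = "https://api.example.com/v1/data"
stored_error = cache.store(url, 503, "service unavailable")
after_error = cache.get(url)
stored_ok = cache.store(url, 200, '{"rows": []}')
after_ok = cache.get(url)
```

Because the `503` is never written, the next request goes back to the source rather than replaying a stale error payload.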


Breaking Changes

- S3 metadata columns renamed: S3 metadata columns renamed from `location`, `last_modified`, `size` to `_location`, `_last_modified`, `_size`.

- `v1/evals` API removed: The `/v1/evals` endpoint has been removed.

- Perplexity removed: Perplexity model provider support has been removed.

- Default query memory limit changed: Default query memory limit increased from 70% to 90%.

Upgrading

To upgrade to v2.0.0-rc.2, use one of the following methods:

CLI:

spice upgrade v2.0.0-rc.2

Homebrew:

brew upgrade spiceai/spiceai/spice

Docker:

Pull the spiceai/spiceai:2.0.0-rc.2 image:

docker pull spiceai/spiceai:2.0.0-rc.2

For available tags, see DockerHub.

Helm:

helm repo update

helm upgrade spiceai spiceai/spiceai --version 2.0.0-rc.2

AWS Marketplace:

Spice is available in the AWS Marketplace.

What's Changed

Changelog

- ci: fix E2E CLI upgrade test to use latest release for spiced download by @phillipleblanc in #9613

- fix(DF): Lazily initialize BatchCoalescer in RepartitionExec to avoid schema type mismatch by @sgrebnov in #9623

- feat: Implement catalog connectors for various databases by @lukekim in #9509

- Refactor and clean up code across multiple crates by @lukekim in #9620

- fix: Improve error handling for distributed mode and state_location configuration by @lukekim in #9611

- Properly install postgres in `install-postgres` action by @krinart in #9629

- fix: Use Python venv for schema validation in CI by @phillipleblanc in #9637

- Update spicepod.schema.json by @app/github-actions in #9640

- Update testoperator dispatch to use release/2.0 branch by @phillipleblanc in #9641

- fix: Align CUDA asset names in Dockerfile and install tests with build output by @phillipleblanc in #9639

- Fix expect test scripts in E2E Installation AI test by @sgrebnov in #9643

- testoperator for partitioned arrow accelerator by @Jeadie in #9635

- Remove default 1s refresh_check_interval from spidapter for hive datasets by @phillipleblanc in #9645

- Fix scheduler panic and cancel race condition by @phillipleblanc in #9644

- Align Spice.ai connector parameter names across catalog/data connectors by @lukekim in #9632

- docs: update distribution details and add NAS support in release notes by @lukekim in #9650

- Enable `postgres-accel` in CI builds for benchmarks by @sgrebnov in #9649

- perf: Cache Turso metastore connection across operations by @penberg in #9646

- Add 'scheduler_state_location' to spidapter by @Jeadie in #9655

- Implement Cayenne S3 Express multi-zone live test with data validation by @lukekim in #9631

- chore(spidapter): bump default memory limit from 8Gi to 32Gi by @phillipleblanc in #9661

- perf: Use prepare_cached() in Turso and SQLite metastore backends by @penberg in #9662

- Improve CDC cache invalidation by @krinart in #9651

- Refactor Cayenne IDs to use UUIDv7 strings by @lukekim in #9667

- fix: add liveness check for dead executors in partition routing by @Jeadie in #9657

- fix(s3): Fix metadata column schema mismatches in projected queries by @sgrebnov in #9664

- s3_metadata_columns tests: include test for location outside table prefix by @sgrebnov in #9676

- docs: Update DuckDB, GCS, Git connector and Cayenne documentation by @lukekim in #9671

- Add s3_url_style support for S3 connector URL addressing by @phillipleblanc in #9642

- Consolidate E2E workflows and require WSL for Windows runtime by @lukekim in #9660

- Upgrade to Rust v1.93.1 by @lukekim in #9669

- Security fixes and improvements by @lukekim in #9666

- feat(flight): add DoPut rows/bytes written metrics for DoPut ETL ingestion tracking by @phillipleblanc in #9663

- Skip caching http error response + add `response_headers` by @krinart in #9670

- refactor: Remove `v1/evals` functionality by @Jeadie in #9420

- Make a test harness for Distributed Spice integration tests by @Jeadie in #9615

- Enable `on_zero_results: use_source` for views by @krinart in #9699

- fix(spidapter): Lower memory limit, passthrough AWS secrets, override flight URL by @peasee in #9704

- Show an error on a shared acceleration file with snapshots enabled by @krinart in #9698

- Fixes for anthropic by @Jeadie in #9707

- Use `max_partitions_per_executor` in `allocate_initial_partitions` by @Jeadie in #9659

- [SpiceDQ] Accelerations must have partition key by @Jeadie in #9711

- Upgrade to Turso v0.5 by @lukekim in #9628

- feat: Rename metadata columns to _location, _last_modified, _size by @phillipleblanc in #9712

- fix: bump datafusion-ballista to fix BatchCoalescer schema mismatch panic by @phillipleblanc in #9716

- fix: Ensure Cayenne respects target file size by @peasee in #9730

- refactor: Make DDL preprocessing generic from Iceberg DDL processing by @peasee in #9731

- [SpiceDQ] Distribute query of Cayenne Catalog to executors with data by @Jeadie in #9727

- Properly set `primary_keys`/`on_conflict` for Cayenne tables by @krinart in #9739

- Add executor resource and replica support to cloud app config by @ewgenius in #9734

- feat: Support PARTITION BY in Cayenne Catalog table creation by @peasee in #9741

- Update datafusion and related packages to version 52.3.0 by @lukekim in #9708

- Route FlightSQL statement updates through QueryBuilder by @phillipleblanc in #9754

- JSON file format improvements by @lukekim in #9743

- [SpiceDQ] Partition Cayenne catalogs writes through to executors by @Jeadie in #9737

- Update to DF v52.3.0 versions of datafusion & datafusion-tableproviders by @lukekim in #9756

- Make S3 metadata column handling more robust by @sgrebnov in #9762

- Fetch API keys from dedicated endpoint instead of apps response by @phillipleblanc in #9767

- Update arrow-rs, datafusion-federation, and datafusion-table-providers dependencies by @phillipleblanc in #9769

- Chunk metastore batch inserts to respect SQLite parameter limits by @phillipleblanc in #9770

- Improve JSON SODA support by @lukekim in #9795

- Add ADBC Data Connector by @lukekim in #9723

- docs: Release Cayenne as RC by @peasee in #9766

- cli[feat]: cloud mode to use region-specific endpoints by @lukekim in #9803

- Include updated JSON formats in HTTPS connector by @lukekim in #9800

- Flight DoPut: Partition-aware write-through forwarding by @Jeadie in #9759

- Pass through authentication to ADBC connector by @lukekim in #9801

- Move scheduler_state_location from adapter metadata to env var by @phillipleblanc in #9802

- Fix Cayenne DoPut upsert returning stale data after 3+ writes by @phillipleblanc in #9806

- Fix JSON column projection producing schema mismatch by @sgrebnov in #9811

- Fix http connector by @krinart in #9818

- Fix ADBC Connector build and test by @lukekim in #9813

- Support update & delete DML for distributed cayenne catalog by @Jeadie in #9805

- Set allow_http param when S3 endpoint uses http scheme by @phillipleblanc in #9834

- fix: Cayenne Catalog DDL requires a connected executor in distributed mode by @Jeadie in #9838

- fix: Add conditional put support for file:// scheduler state location by @Jeadie in #9842

- fix: Require the DDL primary key contain the partition key by @Jeadie in #9844

- fix: Databricks SQL Warehouse schema retrieval with INLINE disposition and async retry by @lukekim in #9846

- Filter pushdown improvements for SqlTable by @lukekim in #9852

- feat: add iam_role_source parameter for AWS credential configuration by @lukekim in #9854

- Fix ODBC queries silently returning 0 rows on query failure by @lukekim in #9864

- feat(adbc): Add ADBC catalog connector with schema/table discovery by @lukekim in #9865

- Make Turso SQL unparsing more robust and fix date comparisons by @lukekim in #9871

- Fix Flight/FlightSQL filter precedence and mutable query consistency by @lukekim in #9876

- Partial Aggregation optimisation for `FlightSQLExec` by @lukekim in #9882

- fix: v1/responses API preserves client instructions when system_prompt is set by @Jeadie in #9884

- feat: emit `scheduler_active_executors_count` and use it in spidapter by @Jeadie in #9885

- feat: Add custom auth header support for GraphQL connector by @krinart in #9899

- Add --endpoint flag to spice run with scheme-based routing by @lukekim in #9903

- When executor connects, send DDL for existing tables by @Jeadie in #9904

- fix: Improve ADBC driver shutdown handling and error classification by @lukekim in #9905

- fix: require all executors to succeed for distributed DML (DELETE/UPDATE) forwarding by @Jeadie in #9908

- fix(cayenne catalog): fix catalog refresh race condition causing duplicate primary keys by @Jeadie in #9909

- Remove Perplexity support by @Jeadie in #9910

- Fix refresh_sql support for debezium constraints by @krinart in #9912

- Implement DML for DynamoDBTableProvider by @lukekim in #9915

- chore: Update iceberg-rust fork to v0.9 by @lukekim in #9917

- Run physical optimizer on `FallbackOnZeroResultsScanExec` fallback plan by @sgrebnov in #9927

- Improve Databricks error message when dataset has no columns by @sgrebnov in #9928

- Delta Lake: fix data skipping for >= timestamp predicates by @sgrebnov in #9932

- fix: Ensure distributed Cayenne DML inserts are forwarded to executors by @Jeadie in #9948

- Add full query federation support for ADBC data connector by @lukekim in #9953

- Make time_format deserialization case-insensitive by @vyershov in #9955

- Hash ADBC join-pushdown context to prevent credential leaks in EXPLAIN plans by @lukekim in #9956

- fix: Normalize Arrow Dictionary types for DuckDB and SQLite acceleration by @sgrebnov in #9959

- ADBC BigQuery: Improve BigQuery dialect date/time and interval SQL generation by @lukekim in #9967

- Make `BigQueryDialect` more robust and add BigQuery TPC-H benchmark support by @lukekim in #9969

- fix: Show proper unauthorized error instead of misleading runtime unavailable by @lukekim in #9972

- fix: Enforce target_chunk_size as hard maximum in chunking by @lukekim in #9973

- Add caching retention by @krinart in #9984

- fix: improve Databricks schema error detection and messages by @lukekim in #9987

- fix: Set default S3 region for opendal operator and fix cayenne nextest by @phillipleblanc in #9995

- fix(PostgreSQL): fix schema discovery for PostgreSQL partitioned tables by @sgrebnov in #9997

- fix: Defer cache size check until after encoding for compressed results by @krinart in #10001

- fix: Rewrite numeric BETWEEN to CAST(AS REAL) for Turso by @lukekim in #10003

- fix: Handle integer time columns in append refresh for all accelerators by @sgrebnov in #10004

- fix: preserve s3a:// scheme when building OpenDalStorageFactory with custom endpoint by @phillipleblanc in #10006

- Fix ISO8601 time_format with Vortex/Cayenne append refresh by @sgrebnov in #10009

- fix: Address data correctness bugs found in audit by @sgrebnov in #10015

- fix(federation): fix SQL unparsing for Inexact filter pushdown with alias by @lukekim in #10017

- Improve GitHub connector ref handling and resilience by @lukekim in #10023

- feat: Add spice completions command for shell completion generation by @lukekim in #10024

- fix: Fix data correctness bugs in DynamoDB decimal conversion and GraphQL pagination by @sgrebnov in #10054

- Implement RefreshDataset for distributed control stream by @Jeadie in #10055

- perf: Improve S3 parquet read performance by @sgrebnov in #10064

- fix: Prevent write-through stalls and preserve PartitionTableProvider during catalog refresh by @Jeadie in #10066

- feat: `spice completions` auto-detects shell directory and writes file by @lukekim in #10068

- fix: Bug in DynamoDB, GraphQL, and ISO8601 refresh data handling by @sgrebnov in #10063

- fix partial aggregation deduplication on string checking by @lukekim in #10078

- fix: add MetastoreTransaction support to prevent concurrent transaction conflicts by @phillipleblanc in #10080

- fix: Use GreedyMemoryPool, add spidapter query memory limit arg by @phillipleblanc in #10082

- feat: Add metrics for EXPLAIN ANALYZE in FlightSQLExec by @lukekim in #10084

- Use strict cast in `try_cast_to` to error on overflow instead of silent NULL by @sgrebnov in #10104

- feat: Implement MERGE INTO for Cayenne catalog tables by @peasee in #10105

- feat: Add distributed MERGE INTO support for Cayenne catalog tables by @peasee in #10106

- Improve JSON format auto-detection for single multi-line objects by @lukekim in #10107

- Add mode: file_update acceleration mode by @krinart in #10108

- Coerce unsupported Arrow types to Iceberg v2 equivalents in REST catalog API by @peasee in #10109

- fix: Update default query memory limit to 90% from 70% by @phillipleblanc in #10112

- feat: Add mTLS client auth support to spice sql REPL by @lukekim in #10113

- fix(datafusion-federation): report error on overflow instead of silent NULL by @sgrebnov in #10124

- fix: Prevent data loss in MERGE when source has duplicate keys by @peasee in #10126

- feat: Add ClickHouse Date32 type support by @sgrebnov in #10132

- Add Delta Lake column mapping support (Name/Id modes) by @sgrebnov in #10134

- fix: Restore Turso numeric BETWEEN rewrite lost in DML revert by @lukekim in #10139

- fix: Enable arm64 Linux builds with fp16 and lld workarounds by @lukekim in #10142

- fix: remove double trailing slash in Unity Catalog storage locations by @sgrebnov in #10147

- fix: Improve GitHub GraphQL client resilience and performance by @lukekim in #10151

- Enable reqwest compression and optimize HTTP client settings by @lukekim in #10154

- fix: executor startup failures by @Jeadie in #10155

- feat: Distributed runtime.task_history support by @Jeadie in #10156

- fix: Preserve timestamp timezone in DDL forwarding to executors by @peasee in #10159

- feat: Per-model rate-limited concurrent AI UDF execution by @Jeadie in #10160

- fix(Turso): Reject subquery/outer-ref filter pushdown in Turso provider by @lukekim in #10174

- Fix linux/macos `spice upgrade` by @phillipleblanc in #10194

- Improve CREATE TABLE LIKE error messages, success output, EXPLAIN, and validation by @peasee in #10203

- fix: chunk MERGE delete filters and update Vortex for stack-safe IN-lists by @peasee in #10207

- Propagate `runtime.params.parquet_page_index` to Delta Lake connector by @sgrebnov in #10209

- Properly mark dataset as Ready on Scheduler by @Jeadie in #10215

- fix: handle Utf8View/LargeUtf8 in GitHub connector ref filters by @lukekim in #10217

- fix(databricks): Fix schema introspection and timestamp overflow by @lukekim in #10226

- fix(databricks): Fix schema introspection failures for non-Unity-Catalog environments by @lukekim in #10227

- feat: Add pagination support to HTTP data connector by @lukekim in #10228

- feat(databricks): DESCRIBE TABLE fallback and source-native type parsing for Lakehouse Federation by @lukekim in #10229

- fix(databricks): harden HTTP retries, compression, and token refresh by @lukekim in #10232

- feat[helm chart]: Add support for ServiceAccount annotations and AWS IRSA example by @peasee in #9833

- fix: Log warning and fall back gracefully on Cayenne config change by @krinart in #9092

- fix: Handle engine mismatch gracefully in snapshot fallback loop by @krinart in #9187

Full Changelog: https://github.com/spiceai/spiceai/compare/v2.0.0-rc.1...v2.0.0-rc.2